Creating Multiple-Choice Questions Using Revised Bloom's Taxonomy

Since formal education was introduced to the world hundreds of years ago, the testing process has been in a state of constant evolution. As eLearning developers at the forefront of a new digital education age, we are charged with finding the ideal way to ensure that our learners have retained the information we have provided for them. One of the most effective methods of doing so is by offering multiple-choice assessments and exams, which allow us to determine whether our teaching methods and eLearning course design are doing their job (which is to provide the best possible eLearning experience). However, the question remains: what is the best technique to use when writing a multiple-choice question? The revised Bloom's Taxonomy may very well be the answer to that all-important eLearning question.

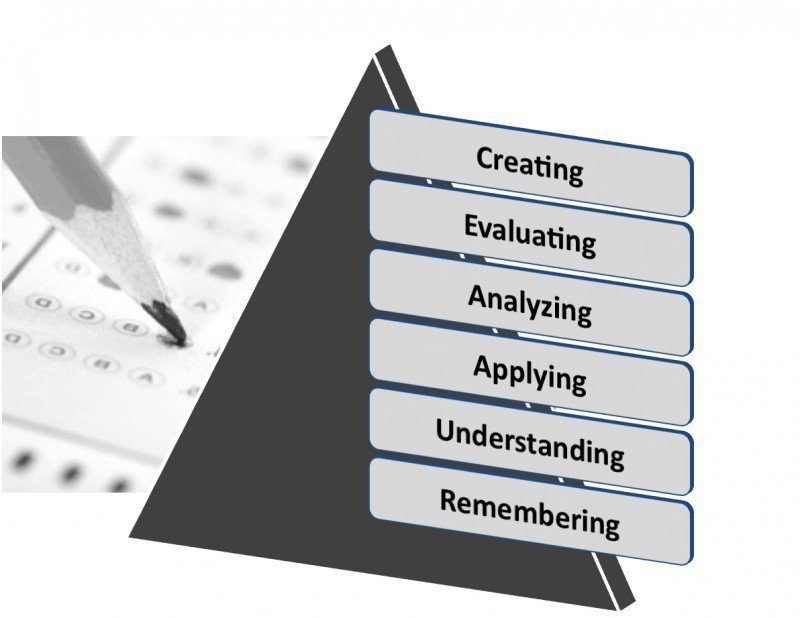

What Is The Revised Bloom's Taxonomy?

The revised Bloom's Taxonomy is based upon the cognitive objectives model that was developed in the 1950s by Benjamin Bloom. According to the model, there are six levels of cognitive behavior that can explain thinking skills and abilities used in the classroom (and in real life, for that matter):

- Creating

Encouraging an individual to look at things differently or to generate new concepts or ideas. In Bloom's original taxonomy model this was known as "synthesis". It requires that the student use designing or organizational skills.

- Evaluating

This requires that learners have a reason behind the course of action that they took and involves experimentation and hypothesis. In this process, the student is asked to critique or summarize information.

- Analyzing

In this process, learners will have to break down the data that was provided in order to fully grasp the content (as it is now in more manageable parts). This usually requires learners to use comparative and/or deconstruction skills.

- Applying

This asks learners to use information that they already have gained, in order to solve a problem that may be similar in nature. This involves implementation of prior knowledge and skills.

- Understanding

Requires that the learners explain the situation or process in order to show that they have understood the materials. This usually involves summarizing, paraphrasing or detailed descriptions.

- Remembering

The learner's ability to retain and recall information. This usually comes in the form of recognition, retrieving, or listing.

According to the model, the first two categories (creating and evaluating) are typically not ideal for multiple-choice tests, as they don't generally allow for predetermined answers (and are classified as "divergent thinking"). However, the last four cognitive abilities allow for more predictable, concrete answers (also known as "convergent thinking"). As such, they are well suited to eLearning tests that are designed around the multiple-choice structure. It's important to mention that you can also transform a higher-level "divergent" thinking question into a "convergent" thinking multiple-choice question if you ensure that it has a concrete answer. For example, you could present the learner with a question that outlines the protocol involved in manufacturing a turbine engine, with one step purposefully left out. Then, ask them to choose the missing step from the answer options. This would force the learner to evaluate the process to see which step is missing, which would enable you to determine if they have a firm grasp of the process's summary, rules, and concepts.
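The divergent/convergent rule described above can be sketched in a few lines of Python. This is only an illustration: the names `BloomLevel`, `DIVERGENT`, and `fits_multiple_choice` are hypothetical and not part of any existing authoring tool.

```python
from enum import Enum


class BloomLevel(Enum):
    """The six levels of the revised taxonomy, highest first."""
    CREATING = "creating"
    EVALUATING = "evaluating"
    ANALYZING = "analyzing"
    APPLYING = "applying"
    UNDERSTANDING = "understanding"
    REMEMBERING = "remembering"


# Per the article, the first two levels are "divergent thinking";
# the remaining four are "convergent thinking".
DIVERGENT = {BloomLevel.CREATING, BloomLevel.EVALUATING}


def fits_multiple_choice(level, has_concrete_answer=False):
    """Return True if a question at this level suits a multiple-choice format.

    Convergent levels always fit. Divergent levels fit only when the
    question has been rewritten so that it has one concrete answer.
    """
    if level not in DIVERGENT:
        return True
    return has_concrete_answer
```

For instance, the turbine engine question would be tagged `BloomLevel.EVALUATING` with `has_concrete_answer=True`, so it passes the check even though evaluating is normally a divergent level.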

5 Tips To Write A Multiple-Choice Test Based On The Revised Bloom's Taxonomy

One of the primary benefits associated with creating tests based upon this model is that the tests will not be unnecessarily difficult for the learner and are more effective in assessing the learner's knowledge of the subject matter. They are also easier to correct and to modify. Here are 5 tips that you can use to write multiple-choice eLearning tests:

1. Always Use Plausible Incorrect Answers In The Questions

One of the biggest mistakes that eLearning test creators make is not making the incorrect answers convincing enough. You have to make them plausible so that you are able to test the learners' ability to remember the information and apply it to the problem. Otherwise, learners can reach the correct answer by elimination, and you cannot gauge whether they fully understand the concept.
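One way to check plausibility after a test has been taken is to count how often each incorrect answer was actually chosen. The sketch below is a minimal illustration under stated assumptions: the function name `unused_distractors` and the 5% cutoff (`min_share`) are hypothetical choices, not an established standard.

```python
from collections import Counter


def unused_distractors(options, correct, responses, min_share=0.05):
    """Return incorrect options chosen by fewer than min_share of learners.

    options   -- all answer labels for the question, e.g. ["A", "B", "C", "D"]
    correct   -- the label of the correct answer
    responses -- the label each learner selected
    min_share -- assumed cutoff; an option below it is treated as implausible
    """
    total = len(responses)
    if total == 0:
        return []  # nothing to analyze yet
    counts = Counter(responses)
    return [
        opt for opt in options
        if opt != correct and counts[opt] / total < min_share
    ]
```

An incorrect answer that almost nobody selects is probably not convincing and is a candidate for rewriting.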

2. Integrate Charts Into The Exam

Include charts or graphs in your test, which will force the learner to use their analyzing skills. By having them interpret the data, they will be tested on whether or not they have really absorbed the information.

3. Transform The Verb

If you want to include a divergent thinking question on your test, you can generally accomplish this by turning the verb of the task into a noun. For example, if you are trying to test your learner's ability to describe a scientific process, have them choose the best description of it from the answer options. This can be used to test both their creating and evaluating skills.

4. Create Examples Or Stories To Test Their Understanding Abilities

Write out detailed stories or examples that the learner must read before answering corresponding multiple-choice questions. This will not only test their understanding ability, but their analyzing skills as well. You can also make the learner tap into their remembering or applying abilities if you create a story or example that asks them to draw upon knowledge they've already acquired.

5. Use Multilevel Thinking

These are the questions that include wording such as "the most appropriate" or "most important". Such questions serve to test the learners' judgment skills or understanding of an in-depth subject. For example, you could ask learners to identify a particular mental illness by first giving them a detailed description of a patient who exhibits a set of observed symptoms, and then asking them to apply a particular psychological theory to come up with a diagnosis.


Conclusion

Remember that many multiple-choice tests are works in progress. You must be willing to reassess them every now and then to ensure that they are still effective in determining a learner's knowledge retention and understanding of the subject matter. If need be, rework faulty questions that may be too easy or too difficult, and don't be averse to changing your instruction if you determine that it may be the root of the problem.
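A simple way to spot questions that are too easy or too difficult is the item difficulty index, which is the proportion of learners who answered the question correctly. The sketch below is illustrative only: the function names and the 0.3 and 0.9 cutoffs are assumptions that you would adjust for your own course.

```python
def difficulty_index(responses, correct):
    """Return the share of learners (0.0 to 1.0) who chose the correct answer."""
    if not responses:
        raise ValueError("no responses to analyze")
    return sum(1 for r in responses if r == correct) / len(responses)


def flag_item(p, too_hard=0.3, too_easy=0.9):
    """Label a question from its difficulty index p.

    The too_hard and too_easy cutoffs are assumed values, not a standard.
    """
    if p < too_hard:
        return "too difficult"
    if p > too_easy:
        return "too easy"
    return "ok"
```

If most questions on one topic are flagged as too difficult, that may point to the instruction rather than the questions themselves.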


References:

- Aiken, L. R. (1982). Writing multiple-choice items to measure higher-order educational objectives. Educational and Psychological Measurement, Vol. 42, pp. 803-806.

- Bloom, B. S., Engelhart, M. D., Furst, E. J., Hill, W. H., & Krathwohl, D. R. (1956). Taxonomy of educational objectives. The classification of educational goals: Handbook I. Cognitive domain. New York: David McKay.

- Bloom's Taxonomy, Retrieved from Wikipedia 01/22/2014, http://en.wikipedia.org/wiki/Bloom's_Taxonomy

- Revised Bloom's Taxonomy, Retrieved from UTAR 01/22/2014, http://www.utar.edu.my/fegt/file/Revised_Blooms_Info.pdf

- Teaching with the Revised Bloom's Taxonomy, Retrieved from Northern Illinois University 01/22/2014, http://www.niu.edu/facdev/programs/handouts/blooms.shtml