Blended Learning Program: How To Measure Its Effectiveness

How do you know if blended learning is working in your organization? People and their skills are strategic assets of the business, but the cost of investing in them is always a concern, so we should consider how to measure the effectiveness of that investment. Measuring the impact of blended learning is no different from measuring the impact of learning generally, other than having a few more elements to measure. And we know that measuring the true impact of learning on an organization can be challenging. You are likely familiar with Kirkpatrick’s model [1] of the 4 levels of evaluation: Reaction, Learning, Behavior, and Results.


The higher you go up the levels, the more time and resources are required, but the better the information you obtain.

Level 1: Reaction

Training evaluation is usually easiest at the lowest level – the measurement of student reactions through simple surveys following a learning event. Though these surveys provide a window into how your learners are responding to the learning event, will they be enough to back you up when there is a need for a greater investment in training, when budgets are lean, or when there is a downturn in your associated markets? Probably not.

Level 2: Learning

To measure positive change at Level 2, we can give pre- and post-quizzes to assess whether knowledge of a specific subject has increased. Well-designed learning usually builds in ways for learners to demonstrate increased competency. Through practice exercises and knowledge checks, we can track some general measures of learning change, e.g., "Of the 217 participants who took the online course, 200 were able to pass the knowledge check at the end of the course the first time."
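As a rough illustration, the Level 2 measures above can be reduced to two simple numbers: a first-attempt pass rate and an average pre/post score gain. The sketch below uses the 200-of-217 figure from the example; the function names and the four pairs of quiz scores are hypothetical and only there to show the arithmetic.

```python
def pass_rate(passed: int, participants: int) -> float:
    """Share of participants who passed, as a percentage."""
    if participants <= 0:
        raise ValueError("participants must be positive")
    return 100.0 * passed / participants


def average_gain(pre_scores: list[float], post_scores: list[float]) -> float:
    """Mean post-quiz score minus mean pre-quiz score, in score points."""
    if not pre_scores or len(pre_scores) != len(post_scores):
        raise ValueError("need one pre and one post score per learner")
    pre_mean = sum(pre_scores) / len(pre_scores)
    post_mean = sum(post_scores) / len(post_scores)
    return post_mean - pre_mean


# Figures from the example above: 200 of 217 passed on the first attempt.
first_attempt_rate = pass_rate(200, 217)
print(f"First-attempt pass rate: {first_attempt_rate:.1f}%")

# Hypothetical pre/post quiz scores (percent correct) for four learners.
pre = [55.0, 60.0, 70.0, 45.0]
post = [80.0, 85.0, 90.0, 75.0]
print(f"Average gain: {average_gain(pre, post):.1f} points")
```

Numbers like these describe learning change only; they say nothing yet about behavior on the job or results for the organization.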

These metrics are helpful for making the case for learning, but are insufficient to argue for the value of learning to the organization. Learning does not necessarily equal improved performance.

Level 3: Behavior

At Level 3, we measure the application and implementation of learning – changed behaviors on the job. It is helpful to repeat these measures at intervals (e.g., 3 months, 6 months, 1 year) to ensure they have not diminished.

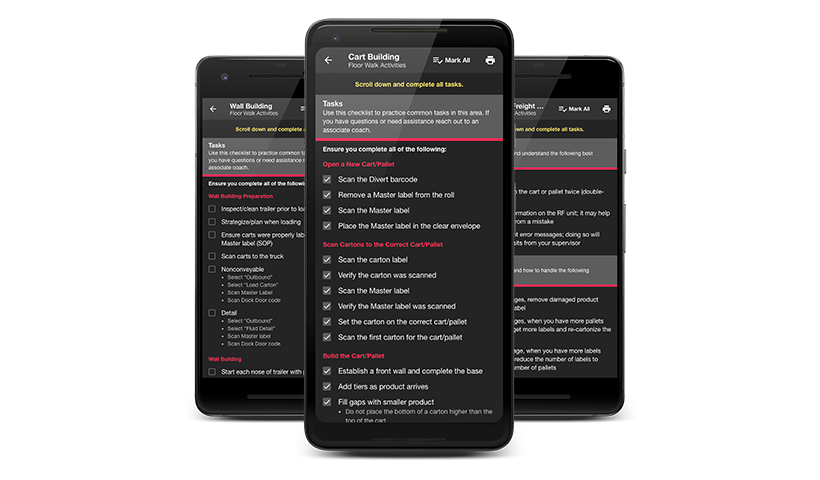

The best Level 3 assessments involve the evaluation of the behavior of the learner by others – a supervisor, mentor, or peer – for more objective assessment. This type of assessment might involve direct observation, an interview, a skills demonstration, a drill, or another form of skills test. It may be helpful to have a mobile app like the one shown here to track post-training assessments.

Level 4: Results

Does change in employee behavior result in measurable gains for the organization? Can you quantify the value of these behavior changes? Your metrics will be tied to your learning objectives. The learning objectives should roll up into an impact objective targeted at the organization as a whole. Is there hard data attached to the behaviors you’re teaching?

Data

- Units developed or built per specified time.

- Production or process on time – the number of jobs completed on time.

- Time – the length of time needed to complete a task.

- Equipment utilization – equipment is used to capacity and is used correctly.

- Cost savings, including time saved because mistakes were NOT made.

- Fewer customer complaints and returns.

- Reduced number of negative incidents – accidents, wasted materials, grievances, etc.

Benefits And Soft Skills

- Sometimes, the goal of training is attitude change. Kirkpatrick & Kirkpatrick (2005) suggest that placing a dollar value on the benefits of training for non-skills-related topics is impossible. Even so, improved soft skills can lead to improvements in measurable statistics, such as increased productivity or lower levels of turnover.

- Don’t attempt to evaluate changes in soft skills immediately. Change often occurs right after a learning event, but over time old habits resurface; employees can cede to peer pressure or simply slip back into familiar ways of doing something.

- You should make assessments at 2-3 months out, and repeat at 6 months and even 1-2 years out.

For example, if your learning objective is to conduct an effective employee performance review, the organizational goal may be to successfully complete employee reviews by year-end or to create a more positive work environment and, thereby, reduce employee turnover.

Calculating ROI

Return On Investment (ROI) is a performance measure that relates the benefits of a program to its costs. One common form is the Benefit-Cost Ratio (BCR): the program's benefits divided by its costs. A BCR of greater than one indicates a successful offering, i.e., the benefits exceed the costs. When costs outweigh the benefits, however, a BCR of less than one is the result. In this case, you’ll need to consider changes or improvements to justify continued investment in the program.
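A minimal sketch of the BCR calculation, taking BCR as total benefits divided by total costs. The dollar figures and the function name are hypothetical and serve only to show how the "greater than one" test works.

```python
def benefit_cost_ratio(benefits: float, costs: float) -> float:
    """BCR = total program benefits / total program costs."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return benefits / costs


# Hypothetical annual figures for one blended learning program.
program_benefits = 90_000.0  # e.g., estimated value of time saved and errors avoided
program_costs = 60_000.0     # e.g., development, delivery, and learner time

bcr = benefit_cost_ratio(program_benefits, program_costs)
verdict = "benefits exceed costs" if bcr > 1 else "benefits do not exceed costs"
print(f"BCR = {bcr:.2f} ({verdict})")
```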

An alternative, and equally useful, formula calculates ROI as a percentage return on the costs. This is perhaps more relatable for investors and market-based stakeholders.

The formula to calculate ROI in this way is:

ROI (%) = [(Benefit - Cost) / Cost] x 100

A program with a calculated result greater than 0% has a net benefit after accounting for costs. An ROI of 120% indicates an offering that resulted in a 120% return on money invested; i.e., the program generated a net gain of $1.20 for every dollar that it cost, after recovering that dollar.

A calculated result of less than 0% means that a program has led to a net cost. If this is the case, it might be worth seeing if there is an unidentified, perhaps social, benefit that is not easily measurable, such as an increase in employee morale or improved teamwork. If you see those types of improvements, you’ll need to determine whether they justify the monetary loss.

A loss of 3% of several thousand dollars may be considered a justifiable cost to realize a happier workplace, but would you be willing to incur a cost of 3% of 5 million dollars? Probably not.
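To make the comparison concrete, here is a small sketch that computes ROI as net benefit divided by cost, times 100, alongside the net amount in dollars. Under that reading, break-even is 0%. The two programs and their figures are hypothetical, chosen so that both show the same -3% ROI at very different scales.

```python
def roi_percent(benefits: float, costs: float) -> float:
    """ROI (%) = (benefits - costs) / costs * 100."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs * 100.0


def net_result(benefits: float, costs: float) -> float:
    """Net gain (positive) or net loss (negative) in currency units."""
    return benefits - costs


# Hypothetical programs: same ROI percentage, very different amounts at stake.
small = {"benefits": 4_850.0, "costs": 5_000.0}
large = {"benefits": 4_850_000.0, "costs": 5_000_000.0}

for name, program in (("small", small), ("large", large)):
    roi = roi_percent(**program)
    net = net_result(**program)
    print(f"{name}: ROI = {roi:.1f}%, net = {net:,.0f}")
```

Reporting the net amount next to the percentage keeps the scale of a gain or loss visible, which is the point of the comparison above.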

The issue remains fairly abstract when you think in terms of formulas, but to establish the ROI of an offering, you need to collect data. This is done through assessment and evaluation of the knowledge and skills gained and the behaviors that have changed. The "cost" is a bit easier to determine; determining the benefits of the training is more challenging and involves knowing how to evaluate the training program at Kirkpatrick’s Level 4.

The decision to invest in people is reflected in the choice of training programs, and many decision-makers continue to seek the Holy Grail: programs that have visible, measurable impact and maximum overall return on investment. The adoption of assessment methodologies is thus imperative for businesses and training organizations.

References: