Defining ITS And AR

There has been quite a bit of excitement recently about these two terms, and using them together may conjure confusing ideas and futuristic sci-fi scenarios. Let’s start with the definitions of the two: Intelligent Tutoring Systems (ITSs) and Augmented Reality (AR).

The first term, ITS, is defined on Wikipedia as “a system that aims to provide immediate and customized instruction or feedback to the learners” [1] without human intervention. Looking a little deeper, ITSs have their roots in expert systems: Artificial Intelligence-based systems capable of making “expert” decisions by applying a set of rules to the data they have accumulated. An ideal ITS would replicate the decision-making ability of a human expert in the field and provide the ultimate tutoring experience, adapting to the learner’s knowledge during the tutoring process.
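To make the expert-system idea concrete, here is a minimal sketch of a rule-based tutor loop. It is an illustration under stated assumptions, not a description of any particular ITS: the `LearnerState` fields, the fixed step of 0.1, the thresholds and the feedback strings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LearnerState:
    """Hypothetical learner model: a mastery estimate plus an error streak."""
    mastery: float = 0.5          # assumed scale: 0.0 (novice) to 1.0 (expert)
    consecutive_errors: int = 0


def update(state: LearnerState, correct: bool, step: float = 0.1) -> LearnerState:
    """Adjust the learner model after each answer (assumed fixed step size)."""
    if correct:
        state.mastery = min(1.0, state.mastery + step)
        state.consecutive_errors = 0
    else:
        state.mastery = max(0.0, state.mastery - step)
        state.consecutive_errors += 1
    return state


# Ordered (condition, action) rules; the first matching rule wins.
RULES = [
    (lambda s: s.consecutive_errors >= 2, "Show a worked example."),
    (lambda s: s.mastery < 0.4, "Give a hint and retry an easier problem."),
    (lambda s: s.mastery > 0.8, "Advance to a harder problem."),
]


def next_action(state: LearnerState) -> str:
    """Pick the tutoring action by scanning the rule base in order."""
    for condition, action in RULES:
        if condition(state):
            return action
    return "Continue at the current difficulty."


if __name__ == "__main__":
    learner = LearnerState()
    for answer in (False, False):
        update(learner, answer)
    print(next_action(learner))
```

The learner model is updated after every answer and the rule base is consulted again, which is the adaptive behaviour described above in its simplest form. A real system would replace the hand-written rules and the fixed step with a domain model and a properly estimated learner model.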

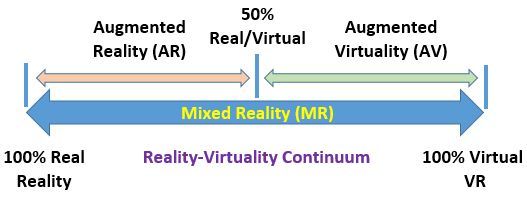

The second term Augmented Reality (AR) is mainly linked with the visual human system and describes the current technology’s capability to bring into our reality virtual objects that, ideally, blend perfectly into the real, hence “augment” with additional information our visual field. A clear picture of the AR place within the reality-virtuality continuum is depicted in Figure 1.

Figure 1. From Real to Virtual [2], spanning eXtended Reality (XR)

Figure 1 illustrates how a user’s view of the real world may be enriched with virtual components. As long as virtual content makes up less than 50% of the scene, we remain in the AR domain. When employed in an ITS, AR can enhance content delivery by bringing virtual content into the user’s view.
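The 50% rule of thumb above can be written as a small classifier over the continuum. This is only a sketch: the function name and the notion of a measurable `virtual_fraction` (for example, the share of the view occupied by virtual content) are assumptions made for illustration, and the “augmented virtuality” label for the other half of the continuum follows the terminology of [2].

```python
def classify_scene(virtual_fraction: float) -> str:
    """Place a scene on the reality-virtuality continuum.

    virtual_fraction is a hypothetical measure in [0, 1] of how much of
    the scene is virtual; the 0.5 threshold is the rule of thumb from the text.
    """
    if not 0.0 <= virtual_fraction <= 1.0:
        raise ValueError("virtual_fraction must be between 0 and 1")
    if virtual_fraction == 0.0:
        return "real environment"
    if virtual_fraction < 0.5:
        return "augmented reality"
    if virtual_fraction < 1.0:
        return "augmented virtuality"
    return "virtual environment"


if __name__ == "__main__":
    for fraction in (0.0, 0.2, 0.7, 1.0):
        print(fraction, classify_scene(fraction))
```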

Overview Of Existing ITS And AR Systems

Both ITS and AR are extensively covered in the research literature as separate topics, but relatively little work combines the two [3]. Each implies a set of fairly sophisticated hardware and software components, as well as advanced knowledge in several fields, from engineering to computing.

For an AR experience to be possible, three conditions must hold: real and virtual components must be combined; the virtual components must be registered (i.e. aligned and blended) within the real scene; and, last but not least, the resulting interface must be interactive (i.e. provide user feedback with minimal delay). To achieve this, an AR system relies on three major technologies:

- Tracking technology: locating real-world objects (in the user’s viewpoint) and aligning the virtual objects with the real-world coordinate system. Tracking systems range from simple techniques using computer vision and image processing to complex software/hardware systems involving markers and dedicated tracking technology (e.g. magnetic, acoustic, optical, etc.) [4]. Several inexpensive tracking toolkits exist, from the marker-based ARToolkit [7] to ARKit [5] and ARCore [6], which can also track without markers, and they integrate with a variety of game engines such as Unreal [8] and Unity [9].

- Display technology: usually a Head-Worn Display (HWD) is used to provide the 3D AR visualization experience, even though a smartphone may provide similar capabilities by displaying 3D content in 2D. Two major categories of displays exist: (a) video see-through: the users see the real environment through a camera system and the virtual component is “augmented” onto the video stream, giving the impression that it is part of the real scene, and (b) optical see-through: the users see the real environment directly and a projective system adds the virtual component to the users’ viewpoint [10].

- Interaction technology: allows the user to interact with the virtual components as if they were real, tangible objects. Interaction can occur through hand gestures and pointing devices, as well as through tangible interfaces [11].
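The tracking item in the list above comes down to registration: once a tracker reports where a real object is relative to the camera, every virtual point attached to that object is moved into the camera frame and projected onto the display. The sketch below shows this with a plain pinhole camera model. It assumes the tracker already supplies a rotation matrix and a translation vector, and the focal length and principal point are made-up values for a 640x480 image; none of the function names come from ARKit, ARCore or ARToolkit.

```python
import math


def rotation_about_y(angle_rad):
    """3x3 rotation matrix for a turn of angle_rad about the vertical axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, 0.0, s],
            [0.0, 1.0, 0.0],
            [-s, 0.0, c]]


def marker_to_camera(point, rotation, translation):
    """Apply the tracked pose: p_cam = R * p_marker + t."""
    return [
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    ]


def project_to_pixel(point_cam, focal_px, cx, cy):
    """Pinhole projection of a camera-frame point; None if behind the camera."""
    x, y, z = point_cam
    if z <= 0:
        return None
    return (focal_px * x / z + cx, focal_px * y / z + cy)


if __name__ == "__main__":
    # Hypothetical pose: marker 2 m in front of the camera, not rotated.
    rotation = rotation_about_y(0.0)
    translation = [0.0, 0.0, 2.0]
    # Virtual point placed 10 cm to the right of the marker centre.
    virtual_point = [0.1, 0.0, 0.0]
    in_camera = marker_to_camera(virtual_point, rotation, translation)
    print(project_to_pixel(in_camera, focal_px=800.0, cx=320.0, cy=240.0))
```

Repeating this for every frame with a fresh pose is what keeps the virtual object visually attached to the real one; the “minimal delay” requirement is essentially a bound on how stale that pose may be when the frame is drawn.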

While AR systems are mainly concerned with content delivery, ITSs aim to generate higher learning gains. The effectiveness of ITSs has been evaluated in many domains, including software engineering, mathematics, physics and genetics.

ITSs can be classified into generations [12]:

- 1st generation ITS (early 1970s): capable of answering questions and issuing feedback; rated about 25% as effective as human tutors.

- 2nd generation ITS (mid-to-late 1990s): capable of providing multi-step learner feedback.

- 3rd generation ITS (late 1990s to mid-2000s): used to monitor and detect the learner’s affective states to determine possible distractions.

- 4th generation ITS (mid-2000s to late 2000s): more advanced systems that facilitate deep (conceptual) learning by employing a mixed-initiative design [13].

- 5th generation ITS (early 2010s to mid-2010s): capable of getting information from the real world outside the ITS [14].

- 6th generation ITS (late 2010s to present): systems still under development, capable of drawing information about the learner from large datasets (using various data mining techniques) and generating intelligent dialogue [15]. The gamification paradigm [16] is introduced as a potential enhancement of such systems and advanced AR visualization is explored.

Looking Into The Crystal Ball: Intelligent Augmented Reality Tutoring Systems

There are several reasons to integrate ITS with AR: enhanced learning experiences, better mental model development, increased usability and a wider communication bandwidth, since 3D and audio content can be delivered simultaneously.

Designing, implementing and deploying ITSs that use the AR paradigm pose many software and hardware technical challenges and require expertise in several domains. A handful of researchers have embarked on this mission, developing Intelligent Augmented Reality Tutoring Systems (IARTSs) to enhance the learning experience for complex assembly tasks [17], to guide the learning of complex medical operations [18] or to train soldiers for military operations in urban terrain [19].

As experiential learning theory [20] suggests, learning involves interacting with the environment, playing with things in the environment, and establishing new mental models. IARTSs provide the means to interact with the environment using virtual augmentations, displaying information when and where it is needed. While developing IARTSs is a complex task, with the advent of new and improved visualization and data processing technology we can expect new generations of IARTSs to emerge in various domains, from medical training to industrial workforce (re)training.

References:

[1] Intelligent Tutoring Systems. (2019). Online at: https://en.wikipedia.org/wiki/Intelligent_tutoring_system

[2] Milgram P, Takemura H, Utsumi A, Kishino F. (1995). “Augmented reality: a class of displays on the reality-virtuality continuum”. In: Das H, editor. Proceedings of SPIE, 2351. The International Society for Optical Engineering, pp. 282-292.

[3] Herbert B, Ens B, Weerasinghe A, Billinghurst M, Wigley G. (2018). “Design Considerations for Combining Augmented Reality with Intelligent Tutors”. Computers and Graphics.

[4] Davis L, Hamza-Lup F. G, Rolland J. P. (2004) “A Method for Designing Marker-Based Tracking Probes,” IEEE and ACM International Symposium on Mixed and Augmented Reality (ISMAR), pp.120-129, 2–5 November, Arlington, Virginia. https://doi.org/10.1109/ISMAR.2004.5.

[5] Apple Inc. ARKit. Online at: https://developer.apple.com/augmented-reality/.

[6] Google Inc. ARCore overview | ARCore. 2019. Online at: https://developers.google.com/ar/.

[7] ARToolkit. 2019. Online at: http://www.hitl.washington.edu/artoolkit/.

[8] Unreal. 2019. Online at: https://www.unrealengine.com/en-US/vr.

[9] Unity. 2019. Online at: https://unity.com/solutions/xr.

[10] Rolland J.P, Biocca F, Hamza-Lup F. G, Ha Y, Martins R. (2005) “Development of Head-Mounted Projection Displays for Distributed, Collaborative, Augmented Reality Applications,” Special Issue: Immersive Projection Technology, MIT Presence, Vol. 14(5), pp. 528-49. https://doi.org/10.1162/105474605774918741.

[11] Billinghurst M. (2013) “Hands and Speech in Space: Multimodal Interaction with Augmented Reality Interfaces”. In: Proceedings of the 15th International Conference on Multimodal Interaction, New York, NY, pp. 379–80.

[12] Corbett A, Anderson J, Graesser A, Koedinger K, Van Lehn K. (1999) “Third Generation Computer Tutors: Learn from or Ignore Human Tutors?”. In: Proceedings of the CHI ’99 Extended Abstracts on Human Factors in Computing Systems, New York, NY, pp. 85-86.

[13] Graesser A.C, Van Lehn K, Rose C.P, Jordan P.W, Harter D. (2001) “Intelligent Tutoring Systems with Conversational Dialogue”. Artificial Intelligence Magazine, Vol.22(4):39.

[14] De Jong C.J. (2016) “Embedding an Intelligent Tutor into Existing Business Software to Provide On-the-job Training”. Christchurch, New Zealand: University of Canterbury.

[15] Hamza-Lup F.G. and Goldbach I.R. (2019) “Survey on Intelligent Dialogue in e-Learning Systems,” International Conference on Mobile, Hybrid, and On-line Learning, pp.49-52, 24–28 February, Athens, Greece. Online at: https://thinkmind.org/index.php?view=article&articleid=elml_2019_3_10_50033.

[16] Stanescu I, Goldbach I.R, Hamza-Lup F.G. (2019) “Exploring the Use of Gamified Systems in Training and Work Environments”. Proceedings of eLearning and Software for Education (eLSE), pp.11-19, Bucharest, Romania, https://doi.org/10.12753/2066-026X-19-001.

[17] Westerfield G, Mitrovic A, Billinghurst M. (2015) “Intelligent Augmented Reality Training for Motherboard Assembly”. In: International Journal of Artificial Intelligence in Education, Vol. 25 (1), pp. 157-172.

[18] Almiyad M.A, Oakden-Rayner L, Weerasinghe A, Billinghurst M. (2017) “Intelligent Augmented Reality Tutoring for Physical Tasks with Medical Professionals”. In: Artificial Intelligence in Education. Springer, pp. 450-454.

[19] LaViola J, Williamson B, Brooks C, Veazanchin S, Sottilare R, Garrity P. (2015). “Using Augmented Reality to Tutor Military Tasks in the Wild”. Interservice/Industry Training, Simulation, and Education Conference 2015.

[20] Experiential Learning (2019). Available online at: https://en.wikipedia.org/wiki/Experiential_learning.