Assessing Employee Skills For Effective Training

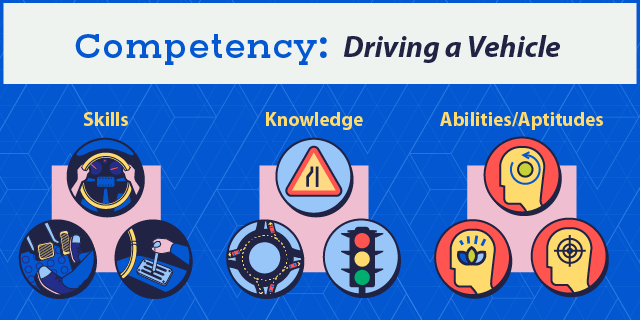

Let’s take a moment to clarify the relationship between a competency and a skill. A skill is tactical or task-related. A competency is a collection of skills plus knowledge plus ability/aptitude, and the integration of all these into observable behaviors. For example:

- Competency: Driving a vehicle

- Skill: Physically operating the vehicle

- Knowledge: Traffic laws

- Ability/Aptitude: Calm, ability to focus

Skills are learned more quickly than competencies because competencies require using multiple skills together. Competencies are assessed by level, from beginner to mastery. Anyone who has tried to learn to drive a car, or to teach someone else, can attest to the marked difference between a beginner and an experienced, expert driver. Moreover, driving a typical four-door car is very different from driving a limousine or an eighteen-wheeler. The competency of driving a vehicle therefore has levels, and the level required depends on the business need the competency supports.

Competency-Based Learning

Competency-based learning means teaching competencies, not just individual skills. Ideally, training gives learners practice in integrating and applying skills and knowledge so they can demonstrate mastery. Assessing skills is one part of assessing competency.

Competency-based learning design is role-centered instead of content-oriented. Each skill learning objective in a course maps to a competency, which in turn maps to one or more roles.

For example, when a new software system is implemented, training will be mapped to employee roles to address the competencies each role requires. A sales clerk will learn how to process sales orders. A manager will learn how to run reports to analyze expenditures. (In contrast, if training were organized around the software, all employees might sit through training on the functionality of the entire system, including the parts they will never use—a waste of employee time, and a quick way to reduce both motivation and retention.)

Like training content, the most useful assessments are tailored to job requirements and to learning objectives matched to the learner’s role.

What Kind Of Objective Are You Testing?

Competencies encompass skills, knowledge, aptitudes, and their integration. To get useful, accurate, reliable test results, you have to use the right kind of test for each. A common assessment mistake is using a knowledge test method for a skill objective.

| Learning objective type | Typical verbs | Content examples |
|---|---|---|
| Knowledge | Remember, list, discuss, select, explain | Facts, theories, other conceptual knowledge |
| Skill | Make (create, assemble, build, construct), perform, apply, analyze, troubleshoot | Job tasks, procedures, processes, scenarios |

Knowledge objective examples:

- List the components of a motor.

- Explain two common challenges of treaty law in international waters.

Skill objective examples:

- Apply good communication principles to understand a sales prospect’s needs.

- Perform a lockout/tagout procedure.

- Locate and troubleshoot a leak in a drainage system.

- Analyze information to recommend a course of action.

True/false, multiple-choice, and matching questions can be good choices for knowledge testing. They are easy to program in online training and easy for a live instructor to grade. That also makes them tempting to overuse. When the objective is to demonstrate the ability to perform a task, even the best multiple-choice questions are inadequate if deployed alone.

Assessing Aptitudes

An aptitude is a natural ability to do something. Examples include verbal and mechanical reasoning, spatial awareness, and error checking. Whereas a resume conveys past achievements, aptitudes can be seen as areas of greatest potential, even if they have not yet been fully developed. Aptitudes can be honed, but not created out of nothing. A person with no head for numbers will have a long row to hoe as a bookkeeper. A lawyer with good spatial awareness will have an advantage in patent law for three-dimensional objects.

These kinds of aptitudes are often measured with standardized tests as part of the hiring process. Examples include:

- Differential Aptitude Test (DAT)

- OASIS-3 Aptitude Survey

- Bennett Mechanical Comprehension Test (BMCT)

For detailed information on any test you are considering, consult the Buros Center for Testing, an independent non-profit that publishes descriptions and critiques of commercially available tests [1].

Companies like SHL, Kenexa, Cubiks, TalentQ, and Capp provide such testing services so you don’t have to reinvent the wheel.

However, standard aptitude tests may not address all the aptitude requirements for a competency, especially affective requirements such as staying calm under pressure or maintaining focus. Like skills, affective performance is commonly evaluated in task-based tests.

Assessing Skill Objectives With Task-Based Tests

In a task-based test, employees actually perform a real job task in a real work environment or a realistic simulation of it. Task-based testing is a form of scenario-based learning. In full scenario-based learning, employees get to choose their actions, observe the consequences, and learn from mistakes in a safe, constructive environment that relates directly to their work environment. This allows for deeper, more memorable learning that they can apply directly back on the job. You can use scenario-based testing at both the skill level and competency level, as well as for affective requirements.

For cognitive skills such as analyzing information and formulating recommendations, provide learners the same type of source information and briefing they would receive on the job. You can assess their skill with a written exam. Better, have them submit their analysis or recommendations in the same form they would on the job—as a presentation or report. A supervisor, more experienced peer, or instructor can evaluate and provide feedback.

Scenario-based learning is especially useful for complex skills such as customer service, problem-solving, and in-depth analysis, where it’s hard to isolate a single skill from related and supporting skills, knowledge, and aptitudes/abilities.

Pro-Tip

In a scenario-based eLearning simulation, the assessment and feedback will likely be coded as part of the module and recorded automatically in an LMS. In real-life simulations, a supervisor or knowledgeable peer usually evaluates the learner’s performance. To keep evaluations objective, consistent, and fair across locations and evaluators, it’s critical that all evaluators (and learners) know the criteria for successful performance. Support for evaluators and learners includes clear descriptions of the task, checklists, and/or some form of rating scale that quantifies the skill level demonstrated.

Self-Assessments Help Learners Help Themselves

Assessments are useful not just for trainers and managers. Giving learners the chance to self-assess as they go through training can increase engagement, let them pause to address whatever gaps they find, and help them achieve a fuller, sounder mastery of the course.

Pro-Tip

Self-assessments are much more useful to learners if you offer resources to immediately address any gaps they discover. Microlearning, articles, videos, websites, forums, mentoring, and other online training courses are all good candidates for targeted learning to shore up skills gaps while those gaps are top of mind and high on the learner’s priority list.

Real-World Context Is Key

To test skills in the most useful and valid manner, assessments must mimic real job environments. That means not just making the content familiar and relevant to the learner’s job role. (A salesperson should demonstrate interview skills by interviewing a prospective customer, not a prospective new hire.) It also means providing:

- The same resources as on the job: Give them access to the same equipment, tools, and reference materials they would normally have to help them complete the task. Finding the correct instructions might be one of the skills you’re testing, along with whether they can complete the main task successfully using that support.

- The same challenges as on the job: If they would normally have to complete a repair task on a boat deck in heavy weather, then testing their skill in a well-lit onshore warehouse is a good start, but it’s not a full test.

Key Takeaways

Skills assessment boils down to evaluating how an employee actually performs the skill, which can take the form of:

- Real-world performance, evaluated by a live instructor.

- Scenario-based performance, evaluated by a computer system or live instructor.

These can be supplemented by options such as:

- Interviews

- Roleplaying

- Written tests

- Self-assessments

- Live face-to-face scenarios (e.g., war games)

- Interactive online scenarios, including online team games

Assessments are most useful, valid, and reliable when they are mapped to job roles and mimic the work environment. Download the eBook Competency-Based Learning: Increase Employee Skills Development Through Competency-Based Learning to discover more about this highly strategic approach to employee skills development.

References: